We're not Reddit marketing experts. We're a B2B GTM team that kept seeing the same thing from different angles: buyer conversations on Reddit that weren't happening anywhere else, AI answers citing Reddit threads over vendor content, and data suggesting the channel deserves more attention than most B2B teams give it.

This is Part 1 in a series where we evaluate Reddit as a B2B GTM channel. This piece covers why we think it deserves serious consideration. Part 2 gets into the practical side: how to actually show up without getting ignored or banned.

Where your buyers are actually talking

Reddit is one of the most-visited websites in the United States, with 121.4 million daily active users as of Q4 2025. It's where your buyers go when they want an honest answer, not a vendor blog: a peer conversation with someone who has used the product and has no reason to sell them anything.

The modern B2B buying journey is largely self-directed. Buyers complete most of their research before they engage with sales, and they're turning to communities where the answers aren't sponsored. According to Reddit's advertising materials citing Comscore data from June 2025, 61% of B2B decision-makers are active on Reddit, and 38% of those buyers aren't on LinkedIn at all. Reddit also cites that 72% of tech decision-makers use the platform for peer reviews and 49% for active product research. (These figures come from Reddit's own marketing, so treat them with appropriate caution.)

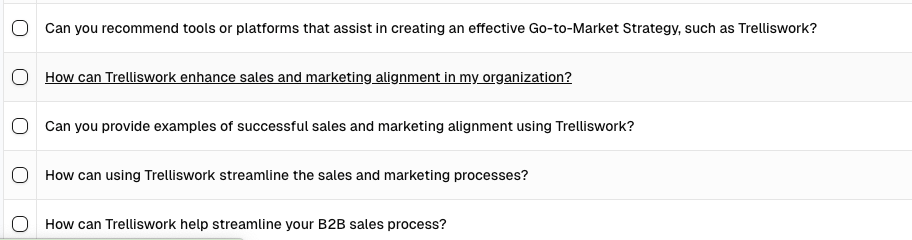

The intent is different from other platforms. When someone types a question into r/marketing or r/revops, they're looking for an answer to a specific problem. That research posture makes Reddit one of the highest-intent channels in B2B. The smaller, more focused communities like r/revops, r/CRM, and r/marketingops consistently carry more purchase intent per conversation than the large ones.

What's possible on Reddit

Reddit supports more than comments: long-form posts, live AMAs, newsletter distribution, and paid advertising. The posts that drive engagement are specific, backed by data, and written from direct experience. The model is to publish the kind of content your marketing team usually saves for gated assets and give it away for free.

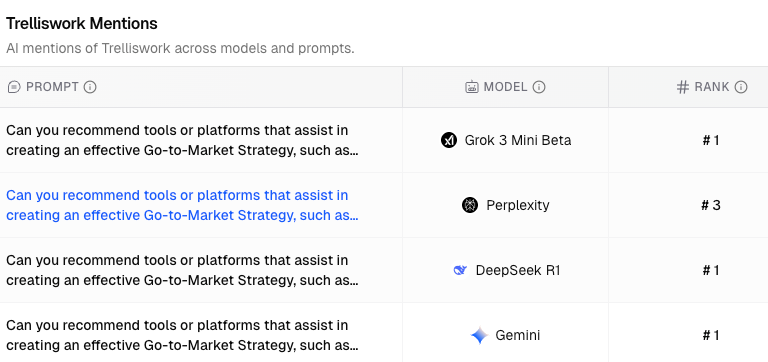

Comments are probably the most underrated GTM activity on the platform. When a thread in r/SaaS asks "what tool does your team use for X?", that's a public buying signal. A useful, honest response gets upvoted, indexed by Google, and cited by AI systems for years. The challenge is volume. It gets overwhelming fast. Tools like Reddit Pro help by surfacing threads that match your target language so you're responding to the ones that matter, not trying to read everything.

The format that works: give a concrete answer first, cover tradeoffs honestly, and end with a soft mention, such as "we cover more of this in our newsletter if you want to go deeper." Value first, link last.

On the paid side, Reddit offers subreddit-level targeting that puts your message in front of communities defined by professional interest. CPCs run well below LinkedIn's for comparable B2B audiences (roughly $0.50-$2.00 vs. $7-$12, a gap of 70% or more at those ranges). Reddit's ad business grew 74% year-over-year in Q3 2025, reaching $2.2 billion in total annual revenue. That cost gap won't hold forever.

Does Reddit content age better?

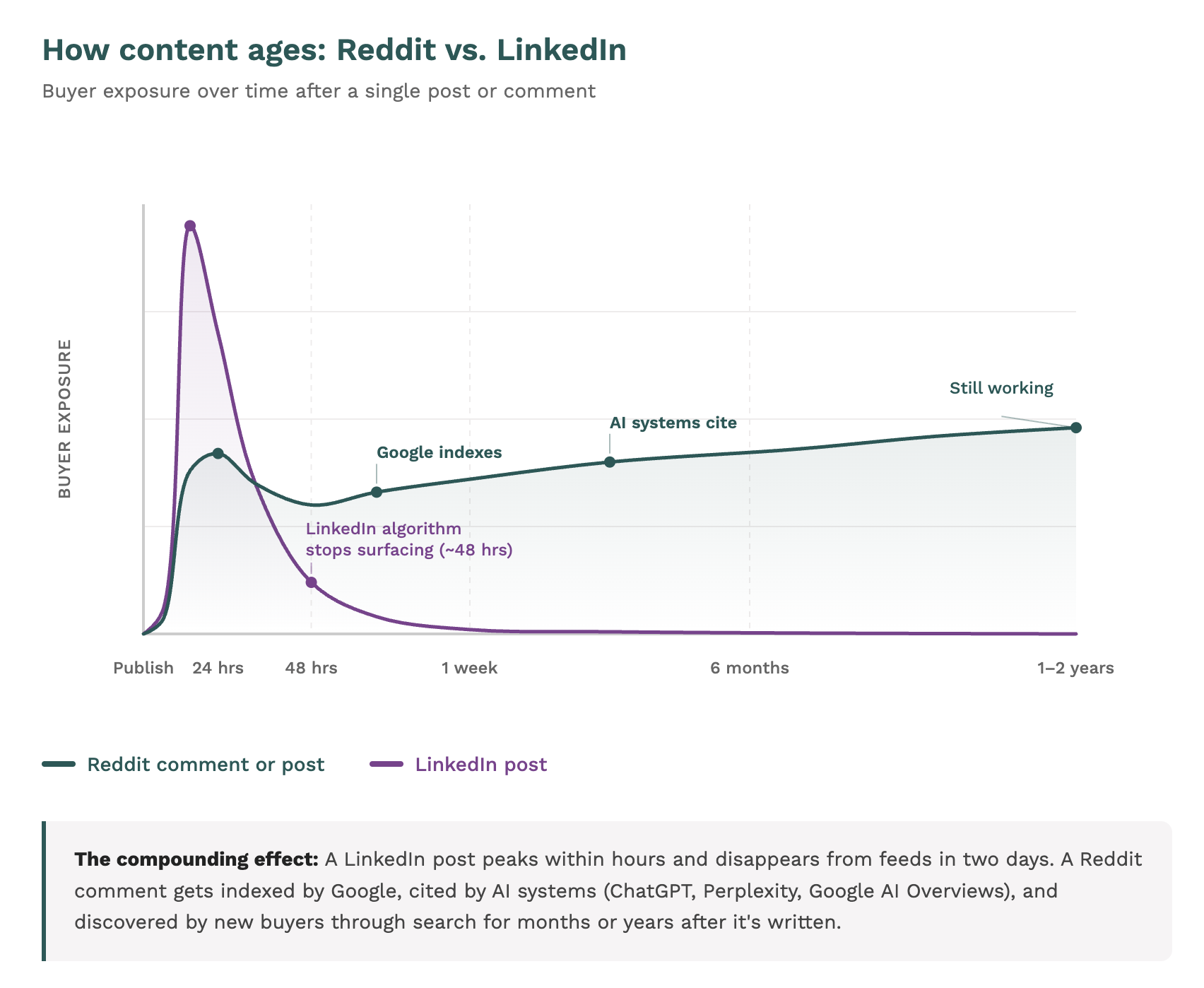

A LinkedIn post has a shelf life of 24 to 48 hours. A Reddit comment can rank in Google for years and keep getting pulled into AI-generated answers long after it was written. According to Semrush's analysis of 150,000+ AI citations, Reddit is the most-cited source across major AI platforms including ChatGPT, Perplexity, and Google AI Overviews.

Google has increased Reddit's visibility in search results. Threads now regularly rank on the first page for B2B software comparisons. Tailscale's Reddit engagement, built through months of technical participation with no promotional agenda, has produced more than 1,300 subreddit discussions ranking in Google search results and thousands of monthly referral visits. All organic, no ad spend.

For organic GTM, the asset doesn't depreciate when you stop spending.

The trust factor

Buyers know the difference between a case study written by the company and a peer recommendation from someone with no stake in the outcome. According to Reddit's own research (a July 2024 survey of 1,250 business decision-makers), 90% of Reddit users trust the platform to learn about new products and 74% say it influences their purchasing decisions. These are Reddit's numbers, not independent research, but the directional signal is hard to ignore.

When someone from your team shows up in r/revops and gives a useful answer, it reads like expertise, not advertising. The companies doing this well treat Reddit as a trust-building channel that makes every other part of their funnel work better.

Companies worth studying

Shopify built r/Shopify into a community hub with over 274,000 members. Team members participate across multiple subreddits without a promotional agenda. The community now generates its own brand advocacy and Google-ranked discussions without Shopify having to push it.

Tailscale built credibility in r/sysadmin, r/devops, and r/networking (communities hostile to vendor promotion) by answering technical questions with no product mention for months. Organic recommendations followed from community members, not from Tailscale itself.

How would you even measure this?

Standard attribution can't capture how Reddit works. Most influence happens through passive consumption. Someone reads a comment, closes the tab, and books a demo three weeks later through branded search. The Refine Labs research on dark social documented a 90% gap between software-attributed and self-reported revenue. Reddit sits firmly in that gap.

There's also a built-in tension. Your instinct is to wire everything up: UTM codes, tracked URLs, attribution pixels. But Reddit punishes that. The community can smell a tracked link, and the more you optimize for measurement, the less your engagement looks human.

The most reliable approach is also the simplest. Add a free-text field (not a dropdown) to conversion forms asking how the person heard about you, and train reps to ask the same question on calls. "I saw your comment in r/revops about attribution" tells you more than any dashboard. Also use extended attribution windows. Reddit's influence typically materializes 60 to 90 days after first contact.
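If those free-text answers end up in a CRM export, a few lines of code can tally the ones that mention Reddit. What follows is a minimal sketch, not a prescribed method: the pattern, the function names, and the sample answers are all hypothetical, and it assumes you can pair a conversion date with a known first-touch date (for example, the date of the comment the buyer cites).

```python
import re
from datetime import date

# Hypothetical pattern: the word "reddit" or a subreddit mention such as "r/revops".
REDDIT_PATTERN = re.compile(r"\breddit\b|(?<!\w)r/\w+", re.IGNORECASE)


def mentions_reddit(answer: str) -> bool:
    """Return True if a free-text 'how did you hear about us' answer cites Reddit."""
    return bool(REDDIT_PATTERN.search(answer))


def within_window(first_touch: date, converted: date, window_days: int = 90) -> bool:
    """Return True if the conversion falls within window_days of the first touch."""
    elapsed = (converted - first_touch).days
    return 0 <= elapsed <= window_days


# Illustrative answers only; real responses will be messier.
answers = [
    "I saw your comment in r/revops about attribution",
    "A colleague forwarded your newsletter",
    "Found you on Reddit, then searched your name",
]
reddit_sourced = [a for a in answers if mentions_reddit(a)]
```

The 90-day default mirrors the upper end of the window described above. A regex like this will miss paraphrases ("that forum thread about attribution"), so treat the count as a floor and keep reading the raw answers.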

Is the window closing?

Most competitors haven't built a Reddit presence yet. The ones who are there often show up wrong, treating it like a broadcast channel. Reddit rewards real expertise and penalizes anything that feels like advertising. The bar for differentiation is still low.

The AI citation advantage, the search visibility, the buyer trust: it's all still available in most B2B categories. The cost gap between Reddit and LinkedIn won't hold forever, and the time to start building is before your competitors figure out the same thing.

So what next?

The harder part is knowing how to actually show up without getting ignored, downvoted, or banned. Which accounts to use, which subreddits to prioritize, how to write a comment that earns trust, and how to build a system your team can maintain. That's what we'll cover in Part 2.
